PeekingDuck v1.3: New GUI with Segmentation and Optimised Models

The PeekingDuck team has just released v1.3! New features include:

- Instance segmentation model nodes, which can be applied to use cases such as privacy masking,

- An image undistortion node to correct distorted images from wide-angle cameras,

- A nifty PeekingDuck Viewer GUI to help with demos and analysis of inference results, and

- Optimised TensorRT models which can run up to 50% faster on NVIDIA GPUs.

Lastly, we’ve created a 30-second introduction video for PeekingDuck which captures its essence – low code and flexible. We hope you can share this video if you have been using PeekingDuck and find it useful!

Instance Segmentation Models

PeekingDuck currently offers different object detection, pose estimation, object tracking and crowd counting nodes. In this release, we are introducing a new category of instance segmentation models to PeekingDuck with these nodes – model.mask_rcnn and model.yolact_edge. More details on model accuracies and FPS benchmarks can be found here: https://peekingduck.readthedocs.io/en/stable/resources/01e_instance_segmentation.html

What is instance segmentation? In object detection, the model predicts the coordinates of a bounding box around each unique object. Instance segmentation takes this one step further by assigning a label to each pixel of each unique object, creating “masks”. Masks provide information about the shape of objects, which could be useful in scenarios such as the detection of tumours or sensor fusion with 3D sensors in autonomous vehicles. The GIF below shows a privacy protection use case using PeekingDuck’s new nodes – the detected masks of people and computer screens are blurred out so that faces and on-screen content cannot be identified.

Privacy protection using instance segmentation
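To make the difference between a bounding box and a mask concrete, here is a minimal pure-Python sketch. It is not PeekingDuck's implementation: the function names, the tiny grayscale "image" and the simple box blur are all assumptions chosen for illustration. It shows that a mask records exactly which pixels belong to an object, so only those pixels are blurred, while the bounding box derived from the same mask also covers pixels outside the object.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]   # grayscale pixel values, one list per row
Mask = List[List[bool]]   # True where a pixel belongs to the object


def mask_to_bbox(mask: Mask) -> Optional[Tuple[int, int, int, int]]:
    """Return the tightest (x1, y1, x2, y2) box around the True pixels."""
    rows = [y for y, row in enumerate(mask) if any(row)]
    cols = [x for x in range(len(mask[0])) if any(row[x] for row in mask)]
    if not rows:
        return None  # empty mask, no object
    return (cols[0], rows[0], cols[-1], rows[-1])


def blur_masked(image: Image, mask: Mask, radius: int = 1) -> Image:
    """Box-blur only the pixels selected by the mask; leave the rest as-is."""
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(height):
        for x in range(width):
            if not mask[y][x]:
                continue
            ys = range(max(0, y - radius), min(height, y + radius + 1))
            xs = range(max(0, x - radius), min(width, x + radius + 1))
            window = [image[j][i] for j in ys for i in xs]
            out[y][x] = sum(window) // len(window)
    return out


# Hypothetical 4x4 image with an L-shaped object mask.
image = [
    [0, 0, 0, 0],
    [0, 90, 90, 0],
    [0, 90, 90, 0],
    [0, 0, 0, 0],
]
mask = [
    [False, False, False, False],
    [False, True, True, False],
    [False, True, False, False],
    [False, False, False, False],
]
bbox = mask_to_bbox(mask)
blurred = blur_masked(image, mask)
```

The bounding box spans the full 2x2 region, but the pixel at row 2, column 2 is not part of the mask and keeps its original value after blurring.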

Image Undistortion

Some cameras, such as CCTVs, use wide-angle lenses, which distort the produced images so that the entire image appears to bow outward from the middle. In our experience, this reduces the accuracy of CV models in some cases, and thus we have created the augment.undistort node that removes radial and tangential distortion from such images. The image below shows an image from a wide-angle CCTV on the left and an undistorted image after using this node on the right.

More details about using this node can be found here: https://peekingduck.readthedocs.io/en/stable/nodes/augment.undistort.html#module-augment.undistort

Before undistortion (left) and after undistortion (right)

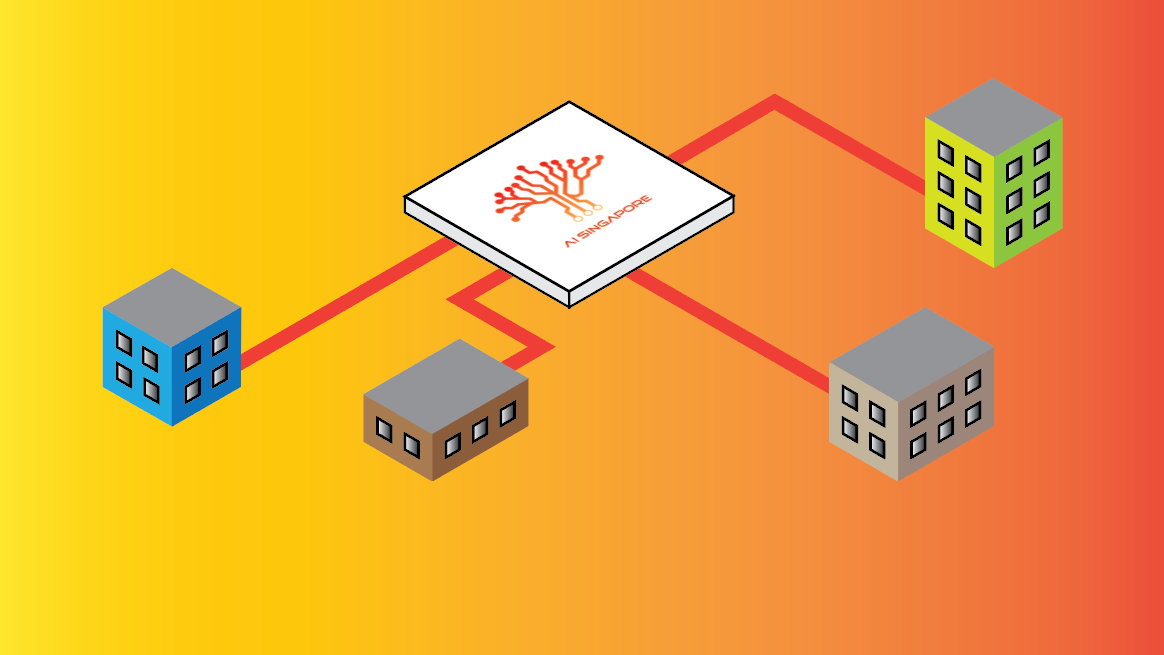
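For readers curious about what radial and tangential distortion mean numerically, the sketch below uses the Brown-Conrady lens model that is common in camera calibration (it is the model OpenCV uses, for example). This is an illustration only, not the code of the augment.undistort node: the coefficient values are hypothetical, the function names are our own, and the points are assumed to be in normalised image coordinates. Undistortion is approximated with a simple fixed-point iteration.

```python
from typing import Tuple


def distort(x: float, y: float, k1: float, k2: float,
            p1: float, p2: float) -> Tuple[float, float]:
    """Apply radial (k1, k2) and tangential (p1, p2) distortion to a point."""
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 * r2
    x_d = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    y_d = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return x_d, y_d


def undistort(x_d: float, y_d: float, k1: float, k2: float,
              p1: float, p2: float, iterations: int = 20) -> Tuple[float, float]:
    """Invert the distortion by fixed-point iteration (fine for mild distortion)."""
    x, y = x_d, y_d  # start from the distorted point as the first guess
    for _ in range(iterations):
        r2 = x * x + y * y
        radial = 1.0 + k1 * r2 + k2 * r2 * r2
        dx = 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
        dy = p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
        x = (x_d - dx) / radial
        y = (y_d - dy) / radial
    return x, y


# Hypothetical coefficients; real values come from calibrating the camera.
COEFFS = dict(k1=-0.2, k2=0.05, p1=0.001, p2=0.001)

original = (0.3, 0.2)
distorted = distort(*original, **COEFFS)
recovered = undistort(*distorted, **COEFFS)
```

With these mild coefficients the iteration converges quickly, and the recovered point matches the original to within floating-point tolerance. Stronger distortion may need more iterations or a different solver.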

PeekingDuck Viewer

We are excited to introduce PeekingDuck Viewer, an interactive GUI application packaged within PeekingDuck that does not require additional dependencies and can be launched with the peekingduck run --viewer command. It can do the following:

- Play, stop or scrub through frames of the output video – helpful in analysing the model predictions, e.g. “Why wasn’t the car detected between frames 456 and 481?”

- Manage and run PeekingDuck pipelines – add different pipelines to the playlist just like adding tracks to a Spotify playlist, e.g. one for object detection of cats, and another for human pose estimation. This is really helpful for demos and showcases.

PeekingDuck Viewer

Optimised TensorRT Models

PeekingDuck now supports running optimised TensorRT models on devices with NVIDIA GPUs. In addition to the existing TensorFlow/PyTorch versions, we have added TensorRT versions of our model.yolox and model.movenet nodes, which provide up to a 50% speed boost with similar accuracy! The plot below shows the performance improvement of the TensorRT MoveNet model over the regular TensorFlow variant on an NVIDIA Jetson Xavier NX with 8GB RAM.

These optimised models are suitable for AI inference on edge, and more information on how to use them can be found here: https://peekingduck.readthedocs.io/en/stable/edge_ai/index.html

FPS benchmarks for MoveNet model
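To put the headline figure in perspective, the short sketch below converts between FPS and per-frame processing time. It assumes that "50% faster" refers to an increase in FPS, and the FPS values used are hypothetical numbers picked to illustrate a 50% gain, not readings from the benchmark plot.

```python
def frame_time_ms(fps: float) -> float:
    """Average time spent on one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def speedup_percent(baseline_fps: float, optimised_fps: float) -> float:
    """Relative FPS gain of the optimised model over the baseline."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (optimised_fps / baseline_fps - 1.0) * 100.0


# Hypothetical figures for illustration only.
baseline_fps = 20.0
tensorrt_fps = 30.0

gain = speedup_percent(baseline_fps, tensorrt_fps)
saved_ms = frame_time_ms(baseline_fps) - frame_time_ms(tensorrt_fps)
```

Under this reading, a 50% FPS gain shortens the time spent on each frame by about a third rather than a half.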

Additional Notable Features

Here is a high-level list of other notable features:

- A tutorial on how to use your custom model has been created, as requested by our users, and can be found here: https://peekingduck.readthedocs.io/en/stable/tutorials/06_using_your_own_models.html

- Only the weights of the chosen model type are downloaded on the first run, instead of the weights for all model types. For example, for model.movenet, if the multipose lightning model type is used, only its weights are downloaded, and not the weights for singlepose lightning or singlepose thunder. This helps reduce start-up time and saves storage space.

- draw.legend font size and thickness can be adjusted to suit different video resolutions.

- Other optimisations and bug fixes. More details can be found in our v1.3.0 changelog: https://github.com/aisingapore/PeekingDuck/releases/tag/1.3.0
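The on-demand weights download mentioned in the list above can be pictured with the sketch below. It is a simplified illustration, not PeekingDuck's actual download code: the dictionary keys, the file names and the missing_weights helper are all hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical mapping of model type to weights file, for illustration only.
MOVENET_WEIGHTS: Dict[str, str] = {
    "singlepose_lightning": "singlepose_lightning.weights",
    "singlepose_thunder": "singlepose_thunder.weights",
    "multipose_lightning": "multipose_lightning.weights",
}


def missing_weights(model_type: str, cached_files: Set[str]) -> List[str]:
    """Return the weights files that still need downloading for one model type.

    Only the file for the chosen model type is considered; weights for the
    other model types are never requested.
    """
    if model_type not in MOVENET_WEIGHTS:
        raise ValueError(f"unknown model type: {model_type}")
    filename = MOVENET_WEIGHTS[model_type]
    return [] if filename in cached_files else [filename]


first_run = missing_weights("multipose_lightning", cached_files=set())
second_run = missing_weights(
    "multipose_lightning", cached_files={"multipose_lightning.weights"}
)
```

On the first run only one file is requested, and on later runs nothing is downloaded because the file is already cached.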

Find Out More

To start using PeekingDuck and find out more about our updated features, check out the links below:

- GitHub repository: https://github.com/aisingapore/PeekingDuck

- Read the Docs: https://peekingduck.readthedocs.io/en/stable/